Datasets Manual

Datasets are the images and movies that are projected onto Science On a Sphere®.

Who Is This For?

The Datasets Manual provides details of how datasets work with SOS and how you can create your own. Some of the information is useful only if you have a Science On a Sphere®, such as the sections on Real-time Datasets or the Visual Playlist Editor (which is only available on the SOS computer). Other details, such as the section on PIPs, are useful for anyone creating datasets, although you may find it easier to create PIPs with the Visual Playlist Editor.

For a basic introduction to content creation, please read the Content Creation Guidelines first. For information on presentation playlists, check the Presentation manual.

Definitions

- Content

- General term that we use for anything that can be displayed on the sphere and should be stored somewhere in /shared/sos/media. Examples include movies, images, and caption files

- Dataset

- A packaged collection of coherent content, which may include multiple layers, labels, legends, colorbars, captions, and so on

- Texture

- A single static image on the sphere. By default, Science On a Sphere® turns on rotation for textures

- Time Series

- Animates through time and by default doesn’t rotate. Can be an image sequence or an MPEG4

- Image Sequence

- A directory of images that are played in sequence

- MPEG4

- The only video format accepted by SOS

- Presentation Playlist

- A collection of datasets grouped together in a list for a presentation

- playlist.sos

- A text file that specifies how a dataset should be displayed on the sphere. Each dataset must have its own playlist.sos file

System Interactions with Datasets

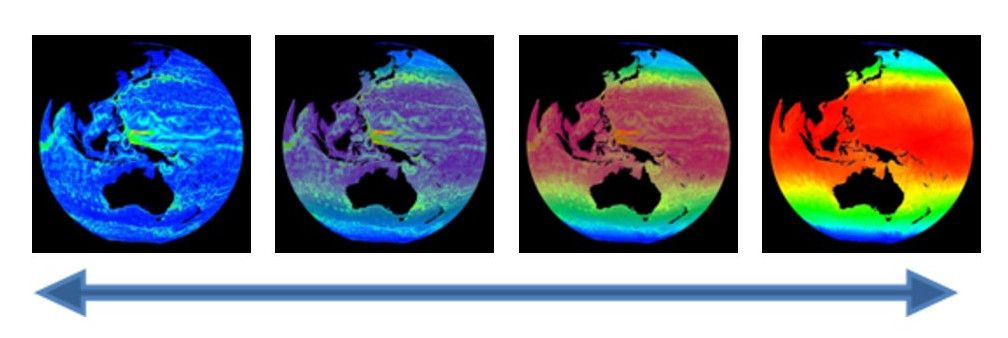

When a dataset is projected on the sphere, you are really looking at four images that have been merged together seamlessly around the sphere. The Science On a Sphere® software splits the images that you display into four disk images every time you load a new dataset onto the sphere. Because all of the work is done by the software automatically, you don’t need to do anything except point the system to where the data is located by creating a playlist.

Dataset Organization

Science On a Sphere® comes with over 550 datasets preloaded on the system. All of the NOAA-provided SOS datasets are put into one of the seven main categories: Air, Extras, Land, People, Snow and Ice, Space, and Water.

The “Extras” category contains assorted datasets that don’t fit into the other categories. Within each category there are many subcategories. Datasets can be put into multiple categories and subcategories. A full list of all of the datasets sorted into their categories is available in the Data Catalog.

This organization is used on the SOS website catalog, in the SOS Remote app, and in the SOS Stream GUI library. The datasets are stored on the SOS computer in directories that use an old naming scheme, so it’s similar, but not exactly the same. For example, the Air datasets are stored in a directory called atmosphere and the Space datasets are in a directory called astronomy. These old names were maintained for consistency for older sites.

Datasets on Disk

All the datasets are stored on the SOS computer in /shared/sos/media. The directories that you will find in here are:

- astronomy

- contains the Space datasets

- atmosphere

- contains the Air datasets

- database

- contains a file used by the SOS Remote app

- extras

- contains narrated movies that are now dispersed, plus Extras datasets

- land

- contains the Land datasets

- models

- contains models that are now dispersed in Air, Ocean, and Snow and Ice

- oceans

- contains the Water datasets

- overlays

- contains the overlay datasets

- playlists

- contains files used for downloading datasets

Within these category directories you will find a separate folder for each of the datasets. In some cases, related datasets are grouped together into a subfolder. For example, in the land directory, there is a blue_marble directory that contains four subdirectories for the four Blue Marble datasets.

All datasets are stored in just one location, regardless of how many categories they are in on the website and SOS Remote App. For example, the Japan Earthquake, Tsunami Wave Propagation, and Wave Heights Combo dataset can be found in the Land and Water categories, but is stored only in the oceans directory. You can find a dataset’s location by clicking the FTP link on the description page on the website, by pressing the Details button in the SOS Stream GUI when the dataset is loaded, or by pressing the Info button on the SOS Remote App when the dataset is loaded.

All datasets created by the site have to go in the site-custom directory in /shared/sos/media. In the site-custom directory, we recommend that you create a folder for each individual dataset that you create, but we leave that up to each site. If you use the Visual Playlist Editor, it requires you to save each dataset into a folder of its own.

Playlists

Differences in Playlist Files

A point of confusion for many SOS users is the difference between a presentation playlist and a playlist.sos file. While all of the same attributes can be used in both, they serve two distinct purposes. A playlist.sos file can be thought of as a configuration file for a dataset. It contains the name, the path to the data to be displayed, and any other settings you wish. Each playlist.sos file should be stored with the content pieces it refers to (though this isn’t required) and should reference just one dataset. A presentation playlist groups multiple datasets into a list that can be used for a presentation. Presentation playlists have to end with the extension .sos and can be named anything as long as there are no spaces or special characters in the name. All presentation playlists should be stored in the sosrc directory in the home folder for each user. You can read more about presentation playlists in the Presentation Manual.

There are two places where you can modify a dataset: in your presentation playlist (such as weather_overview.sos) or in the playlist.sos file. When you modify a dataset in a presentation playlist, the changes will only apply in that specific playlist. If you modify a playlist.sos file, then every presentation playlist that points to that playlist.sos file will reflect those changes. The playlist.sos files that you create should be considered the master copy. Note: Changes made to playlist.sos files that are provided by NOAA will be overwritten every week when the sync with the NOAA FTP server occurs. If you want to make changes to those playlist.sos files, first copy them into your site-custom folder.
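As a rough illustration of the difference, a presentation playlist might look like the sketch below. The file name weather_overview.sos comes from the text above; the two dataset paths are hypothetical placeholders, and the override line is assumed to apply to the include line directly above it.

```
# ~/sosrc/weather_overview.sos (presentation playlist)
# Dataset paths below are hypothetical placeholders.
# First dataset, shown with the defaults from its own playlist.sos
include = /shared/sos/media/site-custom/my_first_dataset/playlist.sos
# Second dataset, with a change that applies only in this presentation playlist
include = /shared/sos/media/site-custom/my_second_dataset/playlist.sos
animate = 0
```

Putting the same animate line into my_second_dataset/playlist.sos instead would change the default for every presentation playlist that includes that dataset.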

Dataset playlist.sos Files

There is a fairly strict format that must be followed within the playlist.sos file. Any specifications that are made in the playlist.sos will be default settings for how that dataset is displayed. Here is an example of what is contained in the playlist.sos file for the Blue Marble dataset:

    name = Blue Marble
    data = 4096.jpg
    fps = 40
    tiltx = 23.5
    category = land
    catalog_url = http://sos.noaa.gov/Datasets/dataset.php?id=82
    majorcategory = Land

An example playlist.sos file for the "Blue Marble" dataset.

At a bare minimum, you have to include the name and data (or layerdata) attributes. Everything else is optional. For site-custom datasets created by the site, there are some attributes that don’t apply and also a few that are just for site-custom datasets. The playlist.sos files can be created with the Visual Playlist Editor or written by hand using a program like gedit or Notepad. For a complete listing of attributes available for the playlist.sos file, see the Playlist Reference Guide. The SOS software will ignore any lines that begin with a pound sign (#). This is a great way to temporarily ignore some attributes or to add comments.

Because all of the content pieces should be stored in the same folder as the playlist.sos file, it is not necessary to include the entire path to the files. You only need to include the data name. For example, to include labels all you need to type is label = labels.txt. If the data is stored in another location, then the path needs to be included. For example, label = /shared/sos/media/atmosphere/dataset/labels.txt.
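The fragment below sketches both path styles, together with a line disabled by a pound sign. The dataset name and the file names my_image.jpg and labels.txt are hypothetical; the full path is the one used in the example above.

```
# Hypothetical site-custom dataset
name = My Dataset
data = my_image.jpg
# File stored next to this playlist.sos, so the bare file name is enough
label = labels.txt
# Same attribute pointing at a file stored elsewhere, currently disabled
# label = /shared/sos/media/atmosphere/dataset/labels.txt
```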

There can be multiple playlist.sos files in one folder for different versions of the dataset. The file names simply need to start with playlist and end with .sos and there must be one file that is named playlist.sos. For example, you could have playlist.sos, playlist_with_audio.sos, and playlist_extra_labels.sos all in the same folder. If you don’t have a playlist.sos file then none of the variations will show up in the data catalog on the iPad.

The “include” lines used in presentation playlists should not be used in a playlist.sos file, since the purpose of the playlist.sos file is to describe a single self-contained dataset with optional layers, PIPs, etc. Only presentation playlists should use the include attribute.

Example playlist.sos

The contents of the “Blue Marble” dataset and its two playlist.sos files.

The “Blue Marble” dataset has two playlist.sos files in the blue_marble folder: playlist.sos and playlist_audio.sos. Both playlists point to the same data, and the only difference is that one includes audio and a timer and the other doesn’t. Notice that the audio files have been put into their own folder. If there are multiple audio files or PIPs, a folder can be created in the dataset folder that contains those files. While this isn’t required, it helps to keep the folder uncluttered.

When files that are referenced in the playlist.sos file aren’t in the same directory as the playlist.sos, the path to the file needs to be included. Take note in the playlist_audio.sos file how the audio points to audio/BlueMarble.mp3 since the mp3 file isn’t in the same directory as the playlist.sos. Either relative paths (audio/BlueMarble.mp3) or full paths (/shared/sos/media/land/blue_marble/blue_marble/audio/BlueMarble.mp3) can be used in the playlist.sos files. Be careful to avoid typos, as the dataset won’t work if anything is wrong!
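A sketch of what playlist_audio.sos could contain is given below. The name, data, fps, and tiltx values are taken from the Blue Marble playlist.sos example and the audio path from the paragraph above; the timer value is a hypothetical placeholder, and the file actually shipped with SOS may differ.

```
# Sketch of playlist_audio.sos; the shipped file may differ
name = Blue Marble
data = 4096.jpg
fps = 40
tiltx = 23.5
# Relative path, because the mp3 sits in the audio subfolder
audio = audio/BlueMarble.mp3
# Hypothetical value: should match the length of the audio track, in seconds
timer = 120
```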

Basic Playlist Options

You can optimize how a dataset is displayed by understanding all of the attributes that are available to you in the playlist.sos files. You can do much more than simply display the dataset.

The Visual Playlist Editor can be used to create both presentation playlists and playlist.sos files and gives you the ability to set all the attributes that are available through an intuitive user interface. All of the attributes available for playlists can be found in the Playlist Format Reference.

Attributes for Texture Datasets

For a texture dataset, there are only a few attributes that you need to consider. When a texture dataset is initially loaded on the sphere, you can set whether you want it to rotate immediately or only after play is pressed. The attribute animate in the playlist controls this. If animate is not included in the playlist, then the default is for the dataset to automatically start rotating. animate can be set to either 0 or 1. 0 will prevent the dataset from animating until play is pressed, and 1 will cause the dataset to start rotating immediately when loaded. For a texture, fps is used to define how fast the dataset will rotate, while for a time series, it defines the animation rate. Another common attribute used with textures is the tilt option. For instance, we have our Earth textures set to load at a 23.5° tilt to resemble the Earth’s actual tilt. This is also useful if you are loading a dataset that highlights the poles, which are hard to see if there is no tilt. To set the tilt, set tiltx, tilty, and tiltz to the number of degrees that you want each axis tilted. The tilt can be positive or negative.
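The texture attributes described above could be combined as in this minimal sketch. The dataset name and file name are hypothetical; the fps and tiltx values are borrowed from the Blue Marble example.

```
# Hypothetical texture dataset
name = My Texture
data = my_texture.jpg
# Wait for play to be pressed before rotating
animate = 0
# Rotation speed for a texture
fps = 40
# Tilt the x axis by 23.5 degrees, as the Earth textures do
tiltx = 23.5
```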

Attributes for Time Series Datasets

For a time series, you have all of the attributes mentioned for the texture, plus many more. Rather than causing a dataset to rotate, animate causes a time series to start animating, but the functionality is the same. The default is for the dataset to start animating immediately. When a presentation is docent-led, it is often helpful to have the time series animate only after play has been pressed. This gives the docent time to provide background information about the dataset and explain what is going to happen. (In Autorun mode “animate” is automatically set to 1 regardless of what is in the playlist.) Another option is to set firstdwell, which is an amount of time that the system lingers on the first frame before animating. The default is zero seconds. The time is listed in milliseconds, so firstdwell = 4000 will dwell on the first frame for 4 seconds. You can also dwell on the last frame by setting lastdwell. When lastdwell is not set, the dataset loops continuously without pausing. Especially with model data, it is nice to set lastdwell so that the audience can get a good look at the last frame before the dataset loops again.
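A docent-led time series might use these attributes as sketched below. The dataset name, file name, and lastdwell value are hypothetical, and lastdwell is assumed to use the same millisecond units as firstdwell.

```
# Hypothetical time series dataset
name = My Model Run
data = my_model_run.mp4
# Docent-led: do not animate until play is pressed
animate = 0
# Linger on the first frame for 4 seconds (value is in milliseconds)
firstdwell = 4000
# Pause on the last frame before looping (assumed to be milliseconds too)
lastdwell = 3000
```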

With particularly long datasets it’s sometimes nice to show only a piece of the dataset. You can do that by setting the startframe and endframe to the frame numbers that you want to start and end on. An example of when to use this would be if you just want to show a loop of Hurricane Katrina, not the entire 2005 season. You would use the 2005 Hurricane dataset, but set the startframe and endframe so that only the piece of the dataset when Hurricane Katrina was visible is shown. The endframe can be a negative number, which counts back from the end. Another way to shorten a dataset is to set the skip option, which allows you to set a skip factor. When skip is set to one, it skips every other image, and when it’s set to two, it plays every third image.
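The lines below sketch how a long time series could be trimmed. The frame numbers are hypothetical and do not correspond to any real dataset.

```
# Frame numbers are hypothetical, for illustration only
# Start at frame 200 instead of the first frame
startframe = 200
# Negative value: counts back 50 frames from the end of the dataset
endframe = -50
# Skip every other image, so roughly half of the remaining frames are shown
skip = 1
```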

To stop an animation, you can simply pause a dataset with the remote. But if you want to stop on an exact frame, then you should use stopframe in the playlist. This lets you set an exact frame that you want the animation to stop on and start animating again after you press play. This is a good feature to use with model data when you want to look at a particular year. To proceed past the frame that you stopped on, you must advance one frame and then press play.
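In a playlist, this is a single line. The frame number here is a hypothetical placeholder for the frame you want the audience to study.

```
# Hypothetical frame number at which the animation should stop
stopframe = 150
```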

Another option that you have for time series is to not only have them animating, but also rotating. For example, the default for the Indian Ocean Tsunami dataset is for the base image to stay stationary while the waves propagate across the ocean. This means that only the audience standing in front of the Indian Ocean can see the waves. When zrotationenable is set to 1, then the dataset will rotate about its z axis while it animates. You can also use zfps and zrotationangle to set the frames per second rate for the dataset and the angle at which the dataset rotates. Make sure that you set your zfps at a rate that allows your audience to still grasp what they are looking at before it rotates out of sight. For especially busy animations, it could be distracting to the audience to see both the animation and the rotation.
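A minimal sketch of enabling rotation for a time series follows. The zfps value is a hypothetical starting point to tune on your own sphere; zrotationangle is left out because its value convention is not described here.

```
# Rotate about the z axis while the time series animates
zrotationenable = 1
# Hypothetical rate; keep it slow enough for the audience to follow
zfps = 10
```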

Autorun Datasets

There are also some functions in the playlist that should be specified when using Autorun. Autorun cycles through the datasets in a playlist automatically, showing each dataset for a specific amount of time. You can specify the amount of time each dataset is shown by setting timer to the number of seconds desired. If this is not specified, then each dataset is shown for 180 seconds. If timer is specified and you are not showing the playlist in Autorun mode, then timer will be ignored. It’s important to use timer when you also have accompanying audio tracks so that the dataset is shown for the length of the audio track. You will want to make sure that the audio is synced with the playlist. You can set audio for each dataset by specifying the desired track with the “audio” attribute. The audio tracks must be in a format compatible with the Linux mplayer, such as .mp3, .mp4, .wav, or .ogg. Audio tracks are available from NOAA for a limited number of datasets. They provide a good way to give your audience information when a docent is not available.

In order to restart a dataset, including the audio and any PIPs that have been added, the duration attribute needs to be set for the length of the dataset in seconds. If duration is not set, the dataset will loop indefinitely, but the audio and the PIPs will not loop.
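For a narrated dataset, the Autorun attributes could be combined as in this sketch. The dataset name, file names, and the 95-second length are hypothetical.

```
# Hypothetical dataset with a 95-second narration track
name = My Narrated Dataset
data = my_movie.mp4
audio = narration.mp3
# In Autorun, show this dataset for the length of the audio, in seconds
timer = 95
# Restart the dataset, its audio, and any PIPs after 95 seconds
duration = 95
```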

Picture in a Picture

Picture in a Picture (PIP) allows you to display single pictures (any of the previously mentioned image formats works), an image sequence, or videos (MPEG4 only) on top of any dataset.

This feature can be used to display any image, but is commonly used to display colorbars, charts and graphs, logos, and other images that supply supplemental information. Images that you are going to use as PIPs can be stored in the dataset folder that they go with.

When used for a colorbar, a PIP can help label a dataset, as seen at right. It is not recommended to embed colorbars or other supplemental imagery into the maps that you create. Leave them as additional image files that can be added in the playlist.sos file. This gives the user complete control over the position and size of the PIP and gives presenters the ability to turn them off on the fly using the SOS Remote app.

A PIP can also be used to provide a close-up view of a region or give the viewer additional context for what they are seeing. In the example at right, the underlying dataset shows the tracks of elephant seals in red, and the PIP is a picture of actual elephant seals. Multiple PIPs can be shown at the same time, or staggered to create a slideshow effect. Make sure to consider the placement of the PIP in order to not block information in the underlying dataset, especially if the PIP is displayed for an extended period of time.

By using PIPs that are PNGs with a transparent background, many different shapes can be projected on the sphere with the underlying dataset as a background. PIPs can be set to display in specific locations on the sphere as markers, as seen at right. Here each pushpin is a PIP that identifies the location of an SOS installation.

Standard PIPs shouldn’t be any larger than 1024x1024 in resolution. Be aware that overlapping and warping can occur if the display size of a PIP is set too large. Make sure to test each dataset before distributing it to other sites, checking the PIP size, placement and timing. PIPs can also be MPEG4 files or image sequences.

Each PIP must be specified with the pip attribute. You can point to an image, time series, an image url (for example: http://example.com/image.jpg), or a live stream (for example: rtsp://server_name/stream_name.sdp). All of the following modifying PIP attributes must then be listed below that PIP. To add another PIP, simply add another line that starts with pip and then list the modifying attributes in the lines below it. You can add as many PIPs as you want.
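The grouping of attributes under each pip line can be sketched as follows. The image file names and all values are hypothetical.

```
# Hypothetical file names; each pip line starts a new PIP
pip = colorbar.png
pipwidth = 40
pipvertical = -35
# Second PIP, with its own modifying attributes listed below it
pip = logo.png
pipwidth = 20
pipalpha = 0.8
```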

PIP Style

There are three different styles for PIPs: projector, room, and globe. projector is the default, where the PIP is replicated four times and placed with the default position centered in front of each projector. As the imagery rotates, the PIP remains stationary in pipstyle = projector. A pipstyle of globe places one PIP on the globe, by default with a latitude and longitude of 0,0. As the sphere is tilted and rotated, this PIP moves with the globe. This allows you to use PIPs as geo-referenced markers. The center of the image is placed at the specified latitude and longitude. A pipstyle of room places one PIP on the globe, by default with a latitude and longitude of 0,0. As the sphere is tilted and rotated, this pip remains stationary relative to the room, with the sphere data sliding underneath it. See Orientation of Data to figure out where 0,0 is set in your room.

PIP Timing

The piptimer attribute has to be set (in seconds) so that the system knows how long to display the PIP. If the piptimer attribute is set to 0, then the PIP will be displayed for the duration of the dataset, which is the default. You can delay the appearance of a PIP by using pipdelay, which is in seconds. Rather than having the PIPs appear abruptly, you can use the pipfadein and pipfadeout to fade the PIP in and out in a specified number of seconds. The time to fade a PIP in and out is included in the total amount of time allotted for the piptimer. By default, a series of PIPs will play through only once. You can set duration to a given number of seconds to restart the underlying dataset and the PIPs.
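A staggered, slideshow-style pair of PIPs might be timed as in the sketch below. The file names and times are hypothetical, and pipdelay is assumed to be counted from the moment the dataset loads.

```
# Hypothetical slideshow of two images; all times are in seconds
# Restart the dataset and both PIPs after 20 seconds
duration = 20
# First image: visible for 10 seconds in total, including both fades
pip = photo1.jpg
piptimer = 10
pipfadein = 2
pipfadeout = 2
# Second image: appears after the first has finished
pip = photo2.jpg
pipdelay = 10
piptimer = 10
pipfadein = 2
pipfadeout = 2
```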

PIP Size

In order for the PIP to be an appropriate size for the sphere and in the proper proportions, you have to set the pipwidth and pipheight. The width and height are measured in degrees latitude and longitude. If you set just the height or the width, the software will automatically scale the image. If you are using pipstyle = projector you won’t want to make your PIP more than 90 degrees wide because the PIP appears four times (once for each projector) and it will start to overlap. In addition to the PIP size, you will also need to determine where you want it displayed on the sphere. If nothing is specified, then the PIP will appear in the middle of each of the projector views. To adjust the position of the PIP, use pipvertical and piphorizontal. Both of these are in degrees. pipvertical is the vertical position of the image relative to the equator, with positive degrees above the equator. Be careful as you move the PIP up and down with pipvertical because the image follows the lines of longitude and becomes warped at the poles. The horizontal position is relative to the center of the projector, with positive degrees east of the projector.

An alternative to using pipvertical and piphorizontal is to use pipcoords, which is set in degrees latitude and longitude. The benefit of using pipcoords is that there is no warping of the images, even near the poles. pipcoords is also used with pipstyle = room and globe to position the PIP.
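As a sketch, a geo-referenced marker could be positioned with pipcoords like this. The file name, coordinates, and width are hypothetical; the value order (latitude, then longitude) follows the description above.

```
# Hypothetical marker image that moves with the globe
pip = pushpin.png
pipstyle = globe
# Latitude,longitude at which the center of the image is placed
pipcoords = 35,135
# Width in degrees; the height is scaled automatically
pipwidth = 5
```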

In the first image, the NOAA logo was added as a PIP and positioned using pipcoords = 30,0; notice how the logo maintained its shape. In the second image, the NOAA logo PIP was positioned with pipvertical = 30; notice how the logo appears pinched at the top, where it is warped along the lines of longitude.

In the first image, the colorbar PIP was positioned using pipcoords = -35,0; notice how the bar appears curved horizontally, but maintains straight vertical lines on the left and right edges of the bar. In the second image, the colorbar was positioned using pipvertical = -35; in this case the horizontal lines remain straight, but the vertical lines are both angled towards the south pole.

When a PIP is an mp4 file, the default playback speed is the frame rate of the dataset on which it is overlaid. If you want to control the frame rate of the PIP, then use pipfps to set a new frame rate. The final option to set with a PIP is pipalpha, which lets you adjust the transparency. If not specified, the PIP shows up opaque. If you don’t want your PIP to completely block the underlying image, you can adjust the opacity of the image from 0, which is completely transparent, to 1, which is completely opaque.
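These two attributes could be applied to a movie PIP as sketched below. The file name and values are hypothetical.

```
# Hypothetical mp4 PIP
pip = inset_movie.mp4
# Play the PIP at its own rate instead of the dataset's frame rate
pipfps = 15
# Half transparent (0 is completely transparent, 1 is completely opaque)
pipalpha = 0.5
```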

Text PIP

A Text PIP is a special kind of PIP that displays text only. A Text PIP has all the same attribute specifications as a normal PIP, such as width, opacity, and fadein time. Rather than using an image editing program to create text and save it as an image file for a PIP, you can use the SOS Visual Playlist Editor and enter text directly into the PIP Text Editor and save the Text PIP to your SOS dataset. A Text PIP gets written to an html file. You should only use the PIP Text Editor to create and edit Text PIP files, and you should not create or edit it by hand.

An example of a text PIP showing the same text in English, Spanish, Korean, and Mandarin Chinese.

Moving PIP

Each type of PIP (image, image directory, movie, text) can be given a simple path file that contains coordinate locations that indicate how to automatically move the PIP on the sphere as the dataset is animating. The path file is a simple comma separated value file (.csv file format) that contains a list of increasing frame numbers, each with a latitude and longitude value. As the dataset is animating, the PIP will be moved to the location specified in the file that corresponds to the current frame being displayed on SOS. An option to render a line path that follows the moving PIP is provided and is turned on by default. The moving PIP feature makes it easy to show animal migrations (example below shows leatherback sea turtle track), hurricane tracks, etc. on SOS without having to render a moving object into the underlying global movie or image data. Use the SOS Visual Playlist Editor’s PIP Path Editor to associate a csv file with your PIP. For a full example on how to create a Moving PIP, including detailed documentation for creating the csv path file, please see the Moving PIPs How-to.

An example of a moving pip

Shared PIP

A Shared PIP is a special PIP that can display continuously over multiple clips in a playlist or between playlists. The Shared PIP stays active until you explicitly stop it. In its current implementation, a Shared PIP supports Live Video PIPs as described in the next section and static PIP images.

A Shared PIP is set up through the Shared PIP dialog box, located in the SOS Stream GUI’s Utilities menu. In the Shared PIP dialog box that pops up, you specify a Live Video PIP in the same way as detailed in the section below. For a static PIP image, you can click the Browse button to locate the image on your SOS computer. The following PIP attributes work with a Shared PIP: pip, pipstyle, pipwidth, pipheight, pipcoords, piphorizontal, pipvertical, pipalpha.

Shared PIP dialog window from the SOS Stream GUI

Once you press Start, the PIP will show up on the sphere, and will remain active even if you switch to a different clip. Press Stop to delete the Shared PIP.

Live Video PIP

A Live Video PIP is a PIP that contains a video that is streaming either from a webcam connected to a local SOS computer, or from an RTSP stream. RTSP (real time streaming protocol) is an application-level protocol that controls the delivery of a real-time data stream, such as live audio and video. This feature may be useful if a site wants to show a real-time video feed of a remote presenter onto their sphere for a particular in-house presentation.

Incorporating in a Playlist

A Live Video PIP is specified in a presentation playlist file (or in a clip’s playlist.sos file) similar to how a normal PIP is specified. To define a Live Video PIP, simply set the value of the pip entry in your playlist file to an RTSP URL: pip = rtsp://server_name/file.sdp. In this example, “server_name/file.sdp” would need to be replaced by the actual name of the remote presenter’s RTSP stream.

    include = /shared/sos/media/oceans/japan_tsunami_waves/playlist.sos
    rename = Japan Tsunami with Live Presenter
    pip = rtsp://server_name/file.sdp
    pipstyle = room
    pipcoords = 0,135
    pipwidth = 65

To specify a live video pip, set the value of pip in your playlist file to an RTSP URL.

If you are using a webcam attached to your SOS computer, simply type webcam for the pip attribute, as in: pip = webcam.

The following PIP attributes work with a Live Video PIP: pip, pipstyle, pipwidth, pipheight, pipcoords, piphorizontal, pipvertical, pipalpha.

Once you select this clip via the iPad or SOS Stream GUI, the live stream should pop up on your sphere as a normal pip does (note, however, it may take a few extra seconds to a minute for the stream to show up on the sphere, depending on network speed etc.).

Requirements

- An RTSP source to broadcast the remote presenter: There are various live video streaming solutions available. Currently, we use Apple’s streaming QuickTime technology with the freely available QuickTime Broadcaster. Other streaming technologies that support RTSP may be used

- A reasonably high-speed internet connection is required to send/receive a live video feed. We recommend a dedicated bandwidth of at least 1.5 MBits/sec, though a higher 3-4 MBits/sec is preferred

Limitations

- The webcam currently does not support audio, and may exhibit a delay in frame rate over time

- Although RTSP supports both live data feeds and stored video/audio clips, in our current implementation, only live data feeds are supported for display in a PIP

- If the live stream is stopped by the host while a Live Video PIP is being shown on the sphere, SOS Stream GUI will hang for about two minutes, and then it will resume normal activity. So, the best thing to do if you notice a live video stream is no longer working on the sphere is to wait for at least two minutes before using any controls on the iPad or SOS Stream GUI, otherwise, you may have to manually stop SOS and restart it

Annotation Icons

The SOS Remote app, through the annotation feature, gives presenters the ability to draw on the sphere and place icons on the sphere. The SOS Remote app comes with a set of default icons, or you can create custom icons for your site.

If you would like to create your own icons, use a transparent PNG with a minimum resolution of 256x256, as shown here. Custom icons can either be attached to specific datasets, or made available for all datasets in the default icon library.

Dataset Specific Icons

To add an icon to your dataset’s playlist.sos file so that it shows up in the Icons dialog when you load the dataset, simply add an icons = value attribute/value pair to the dataset’s playlist.sos file and place the icon in the dataset directory. Note that you can specify more than one icon by making a comma separated list with no spaces.

    name = Blue Marble (23 degree tilt)
    data = 4096.jpg
    category = land
    icons = satellite.png,rocket.png

In this example, two icons have been added to the Blue Marble playlist.sos file: satellite.png and rocket.png.

In this case, the icon files should be placed in the same directory as the playlist.sos file. In other words, use relative paths when specifying the icons in the playlist.sos file. Once you load the dataset on SOS and then open the Icons dialog, the two icons you added will appear at the top of the list of available icons.

Another way to specify an icon is via the presentation playlist located in the sosrc directory. You specify the icons = value attribute/value pair for a clip here as well, however, the pathname of the icon file must be specified relative to the location of the clip’s playlist.sos file.

    # Loggerhead Sea Turtle Tracks
    include = /shared/sos/media/oceans/LoggerheadSeaTurtleTracks/playlist.sos
    icons = ../../site-custom/turtle.png

Paths to icons are relative to the location of the playlist.sos file. This example shows how to add an icon that's in your site-custom folder relative to the location of the Loggerhead Sea Turtle dataset's playlist file.

General Icons

Finally, if you have a general set of icons that you create and that your site may use often, you can add these icons to the default icon library so that they are automatically available with every dataset. To do this, simply add your icons to the directory /shared/sos/etc/AnnotationIcons/.

Layers & Overlays

Layers and overlays give presenters the ability to show and hide information on the sphere as needed.

Layers

The layering capability in SOS allows presenters to dynamically turn layers on and off. A multi-layer display can be created either statically in the dataset playlist beforehand, or interactively using the SOS Remote. By using the Display Elements list in SOS Remote, the user can toggle individual layers on and off, adjust the level of transparency of each layer, or delete a layer. Any labels/captions or PIPs associated with a clip are now also listed individually in the Display Elements list. These can be interactively manipulated like any other layer.

Pre-defined Layers

A multi-layer dataset may be defined by using the layer attribute. Each use of a layer = name attribute/value pair within a playlist.sos file defines a new layer and specifies the name of the layer. The specified name of the layer is used to identify it in the layer table in SOS Remote’s Layers tab. Each new layer specified appears visually on top of any previous layers.

The layerdata attribute is repeated for each layer to specify the corresponding data file for the layer. A layer defined this way may have a layervisible = no attribute/value pair to specify that the layer is not initially visible. A layer may also have a layeralpha attribute/value pair to specify the initial opacity of the layer. An alpha value of 0.0 means that the layer is totally transparent, and 1.0 means the layer is totally opaque. A slider in the SOS Remote interface is available to interactively manipulate the opacity of each layer.
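The attributes described above can be illustrated by generating the layer portion of a playlist.sos file. This is a sketch only: the Layer class, the render_layers helper, and the file names are hypothetical, and the assumption that each layer's attributes directly follow its layer = name line is inferred from the description rather than confirmed against the Playlist Reference Guide.

```python
from dataclasses import dataclass


@dataclass
class Layer:
    name: str
    data: str             # data file for the layer (hypothetical names below)
    visible: bool = True  # False emits layervisible = no
    alpha: float = 1.0    # 0.0 is fully transparent, 1.0 is fully opaque


def render_layers(layers):
    """Render layer attribute/value pairs; later layers draw on top of earlier ones."""
    lines = []
    for layer in layers:
        if not 0.0 <= layer.alpha <= 1.0:
            raise ValueError(f"alpha out of range for layer {layer.name!r}")
        lines.append(f"layer = {layer.name}")
        lines.append(f"layerdata = {layer.data}")
        if not layer.visible:
            lines.append("layervisible = no")
        if layer.alpha != 1.0:
            lines.append(f"layeralpha = {layer.alpha:g}")
    return "\n".join(lines)


print(render_layers([
    Layer("Blue Marble", "4096.jpg"),
    Layer("Clouds", "clouds.png", visible=False, alpha=0.5),
]))
```

Here the Clouds layer sits on top of Blue Marble, starts hidden, and appears at half opacity once a presenter turns it on.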

Orienting Layers

In order for layers to overlap properly, it is important to make sure that the maps are oriented identically. In the case where two layers have different center points, you can set layereast, layerwest, layernorth, and layersouth. These commands specify the geographic extent of the data within the layer. They specify the east and west edges of the data in degrees east longitude, and the north and south edges in degrees north latitude.

Overlays

Additionally, we have created overlays, located in the /shared/sos/media/overlays directory, which show up as a library category in SOS Stream GUI and on the iPad. On the iPad, they are accessible through a button on the presentation tab. The overlays contain useful earth-related transparent layers (specified as clips in a standard playlist.sos file format) that can be used for both pre-programmed and interactive layering. An example of a layer in this category is Country Borders. If a site wants to add more overlays for general use, they should be placed in the site-custom folder with a playlist.sos file that has the category defined as overlay. Examples of playlist.sos files for overlays can be found in the /shared/sos/media/overlays directory.

You can have your custom overlays appear in the Overlays dialog of the iPad app just as the NOAA-managed overlays appear for quick and dynamic layering. To do this:

- In your playlist.sos file, add the following attribute/value pair (this is optional and allows your overlay to show up in the overlays category in SOS Stream GUI’s Library menu):

    category = overlays

- In your playlist.sos file, add the following attribute/value pair (this is what makes your overlay appear in the iPad app’s Overlays dialog):

    subcategory = Overlays

- In the SOS Stream GUI application on the SOS computer, select the Library > Update Library menu option to update the Data Catalog with your new overlay dataset. Once this is complete, select the Update Now button on the iPad app’s Settings tab, and your overlay dataset will appear in the Overlays dialog of the iPad app.

Real-time Datasets

There is a collection of over 40 real-time datasets that are provided by NOAA. Because these datasets tend to be quite large and internet speeds vary from site to site, the SOS software can be modified to adjust the number of real-time datasets downloaded.

Typically, sites are set to download real-time data either every hour or every three hours. The frequency of the downloads and the number of real-time datasets downloaded can be adjusted for each site. The /shared/sos/media/playlists directory contains various real-time dataset playlists that range from just a few datasets to all of the real-time datasets. You can also create your own playlist of the real-time datasets that your site is interested in using. A crontab is then used to keep all of the real-time datasets in your playlist up to date.

Alternative Formats

In addition to image and movie files, Science On a Sphere® has limited support for KML and WMS data sources.

Keyhole Markup Language (KML)

SOS supports Keyhole Markup Language (KML) data in addition to the previously existing movie and image formats. KML is a popular specification and actively used with Google Earth for displaying data on a sphere. The initial SOS KML capability supports a limited set of the entire specification, which includes many of the commonly used KML features you would typically display in Google Earth.

Implementation Notes

Typically, KML files are used with Google Earth which allows users to display information on a virtual sphere similar to SOS. There are a couple of differences to be aware of. Google Earth has additional space around the sphere where legends, icons, or other meta information can be displayed. SOS has only the sphere for displaying data. By default, all ancillary information is displayed at point 0° North, 180° East. Each subsequent piece is staggered from this starting point. This is user configurable. Within the playlist, use kmllegendxoffset and kmllegendyoffset to specify a new location.

KML Placemarks or Icons referenced in KML may appear small on the sphere. Additional playlist parameters have been included to scale icons so that they are more visible on the sphere. Use kmlplacemarkscale to scale these features if necessary.

More information on these commands can be found in the Playlist Reference Guide.

Special Notes for KML

Often, KML files reference remote data via a web address. KML files of this nature require SOS to have access to the internet to retrieve these files. Depending upon your network connectivity and the number of external links referenced in the KML file, the initial load may take some time. SOS will perform local caching of downloaded files and subsequent loads will perform faster.

It is strongly recommended to test KML files prior to any presentation to ensure that data is cached locally and the presentation is not delayed by waiting for remote files to be retrieved. When an SOS playlist references a KML dataset, SOS will parse the file and store any temporary or cache information in the system temporary directory. The default is /tmp on SOS systems.

Limitations for KML

The SOS software does not support the entire KML specification. Here is a list of major items not currently supported in this release: Tours, Fly To, Features with 3 Dimensions, Resource Map, Models, Regions. If KML data isn’t displaying correctly, please contact the SOS support team at [email protected] and include the problem KML file in your message.

You cannot have multiple KML layers defined within a single playlist item because we do not support time matching capabilities between various KML files. Future versions should allow multiple static KML files.

Web Mapping Service (WMS)

SOS supports loading imagery directly from an Open Geospatial Consortium (OGC) Web Mapping Service (WMS). This feature requires an internet connection and will not work unless the SOS system has access to the internet and the referenced WMS server.

A WMS lets users request data through URLs, using key/value pairs that define terms such as the width, height, and image type. A unique feature of the WMS standard allows users to request subsets of imagery by defining a bounding box from lower-left and upper-right latitude and longitude coordinates. Together, these features make it possible to host very large, high-resolution imagery from which users request smaller versions or subsets. SOS takes advantage of this functionality through the magnifying glass, which shows more detail as you increase the zoom level on the sphere.

    https://www.gebco.net/data_and_products/gebco_web_services/web_map_service/mapserv?request=getmap&service=wms&BBOX=-90,-180,90,360&crs=EPSG:4326&format=image/jpeg&layers=gebco_latest&width=1200&height=600&version=1.3.0

A typical WMS URL.
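A request of this shape can be assembled from its key/value pairs. The sketch below builds a GetMap URL for a bounding-box subset using WMS 1.1.1 parameter names, where BBOX is ordered west, south, east, north. The function name, server address, and layer name are hypothetical, and a real server may require different or additional parameters.

```python
from urllib.parse import urlencode


def build_getmap_url(base_url, layer, west, south, east, north,
                     width, height, image_format="image/png"):
    """Build a WMS 1.1.1 GetMap URL for the given bounding box and image size."""
    params = {
        "SERVICE": "WMS",
        "VERSION": "1.1.1",
        "REQUEST": "GetMap",
        "LAYERS": layer,
        "STYLES": "",  # empty value requests the server's default style
        "SRS": "EPSG:4326",
        "BBOX": f"{west:g},{south:g},{east:g},{north:g}",
        "WIDTH": width,
        "HEIGHT": height,
        "FORMAT": image_format,
    }
    # Keep commas, slashes, and colons readable instead of percent-encoding them.
    return base_url + "?" + urlencode(params, safe=",/:")


print(build_getmap_url("https://example.org/wms", "demo_layer",
                       west=-10, south=35, east=5, north=45,
                       width=1200, height=800))
```

Shrinking the bounding box while keeping the pixel size constant is what yields more detail for a smaller area.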

To use a WMS URL with SOS, you set it as the value for the layerdata attribute.

    #WMS Data Example
    layerdata = //WMS//https://www.gebco.net/data_and_products/gebco_web_services/web_map_service/mapserv?Service=WMS&WMS=worldmap&Version=1.1.0&Request=GetMap&BBox=<WEST>,<SOUTH>,<EAST>,<NORTH>&SRS=EPSG:4326&Width=<PIXELWIDTH>&Height=<PIXELHEIGHT>&Layers=gebco_latest&Format=image/png

Four things have changed: //WMS//, Height=<PIXELHEIGHT>, Width=<PIXELWIDTH>, BBox=<WEST>,<SOUTH>,<EAST>,<NORTH>. All four items are required for WMS data to work correctly with SOS. The first item indicates that the path that follows is a dynamic WMS URL. The last three are placeholders for dynamic fields that change while SOS is in use. In each WMS URL used within SOS, write these placeholders exactly as shown above; SOS automatically substitutes actual values when loading the data.
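The four changes can be applied mechanically to an ordinary GetMap URL. The following is a minimal sketch with a hypothetical function name and server address; it keeps the original parameter names and order, and it assumes SOS matches the BBox, Width, and Height keys regardless of letter case, which this manual does not confirm.

```python
from urllib.parse import parse_qsl, urlsplit

# Placeholder strings that SOS fills in while the dataset is in use.
SOS_PLACEHOLDERS = {
    "bbox": "<WEST>,<SOUTH>,<EAST>,<NORTH>",
    "width": "<PIXELWIDTH>",
    "height": "<PIXELHEIGHT>",
}


def to_sos_layerdata(wms_url):
    """Turn a fixed WMS GetMap URL into an SOS layerdata value."""
    parts = urlsplit(wms_url)
    params, seen = [], set()
    for key, value in parse_qsl(parts.query, keep_blank_values=True):
        placeholder = SOS_PLACEHOLDERS.get(key.lower())
        if placeholder is not None:
            seen.add(key.lower())
            value = placeholder
        params.append(f"{key}={value}")
    missing = sorted(set(SOS_PLACEHOLDERS) - seen)
    if missing:
        raise ValueError(f"WMS URL lacks required parameters: {missing}")
    base = f"{parts.scheme}://{parts.netloc}{parts.path}"
    return "//WMS//" + base + "?" + "&".join(params)


print("layerdata = " + to_sos_layerdata(
    "https://example.org/wms?SERVICE=WMS&VERSION=1.1.1&REQUEST=GetMap"
    "&LAYERS=demo_layer&SRS=EPSG:4326&BBOX=-180,-90,180,90"
    "&WIDTH=1200&HEIGHT=600&FORMAT=image/png"
))
```

The sketch raises an error when any of the three dynamic parameters is absent, since all of them are required for the URL to work with SOS.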

Note also that the CRS= value should be either CRS:84 or EPSG:4326. If CRS= is not working, try SRS= as in the example above.

See the Web Map Service (WMS) Tutorial for more information on using WMS in SOS.

Special Notes for WMS

All WMS URLs refer to remote data and require SOS to have access to the internet to retrieve the corresponding imagery. Depending upon your network connectivity and the performance of the remote WMS server, the initial load may take some time. SOS will perform local caching of downloaded files and subsequent loading will perform faster.

It is strongly recommended to test WMS playlists prior to any presentation to ensure that data is cached locally and the presentation is not delayed by waiting for remote files to be retrieved.

When an SOS playlist references a WMS dataset, SOS will retrieve and store any temporary zoom files or cache information in the system temporary directory. The default is /tmp on SOS systems. If you try out your WMS dataset and get a loading error, go into the /tmp directory, delete the directory corresponding to the URL in your layerdata field, modify your layerdata value, and then try again.

Limitations for WMS

With the SOS magnifying glass enabled, SOS will determine a bounding box for the area currently under view and dynamically retrieve and load that image. A bounding box cannot be determined around either the North or South Pole. The nearest image that does not cross the pole will be used.

Visual Playlist Editor

The Visual Playlist Editor (VPLE) allows you to easily construct new datasets for your system. You simply add the layers, PIPs, title, and other settings that you want and when you save the dataset, everything you referenced is saved into a single folder along with an automatically generated playlist.sos file.

When creating a new dataset, make sure to specify only a subcategory. The major category will automatically be site-custom. If you forget to specify a subcategory for a dataset, it will be put into an uncategorized subcategory in the site-custom category. You may also wish to include keywords and a creator for your site-custom dataset; these are added to the SOS Data Catalog entry for your dataset. You can also add a description to your playlist.sos file that will show up on the iPad and the SOS kiosk.

When you use the VPLE to modify datasets in your presentation playlist, changes you make to the datasets will affect only the presentation playlist that you are editing and not the underlying playlist.sos files for each dataset. The playlist.sos file is the master copy of how the dataset is displayed and should not be edited in any of the NOAA-supplied datasets (the VPLE prevents that, by default). If you make changes in the playlist.sos files, the changes will appear in everyone’s playlists and weekly NOAA dataset updates may overwrite your changes.